Natural Language Processing (NLP) is one of the most exciting areas of artificial intelligence. It allows machines to understand, process, and analyze human language. But before a machine learning model can understand text, the text must go through several preprocessing steps.

One of the most important preprocessing tasks is text normalization. Words often appear in different forms, and machines treat them as completely separate tokens.

For example, consider these words:

connect

connected

connecting

connection

Humans instantly understand that these words share the same root meaning. However, a computer might treat them as four different words.

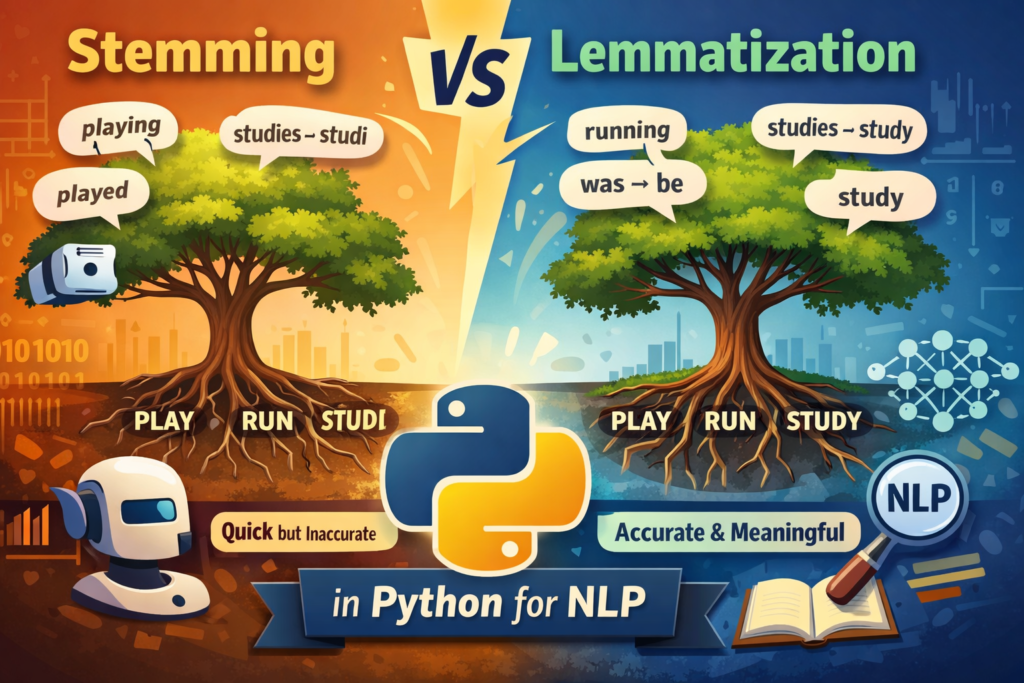

This is where stemming and lemmatization come into play.

Both techniques reduce words to a base form so that NLP models can process text more effectively. If you are learning NLP with Python, understanding how stemming and lemmatization differ is an essential step.

In this beginner-friendly guide, you will learn:

- What stemming is

- What lemmatization is

- Key differences between the two techniques

- How to implement them in Python

- When to use each method in real NLP projects

By the end of this guide, you will understand how stemming and lemmatization differ and know how to use each in your own Python projects.

These techniques are an important part of text preprocessing in Python for NLP, which prepares raw text for machine learning models.

Why Text Normalization Is Important in NLP

Before comparing stemming and lemmatization, it’s important to understand why text normalization matters.

When working with text data, machines see words as tokens. If a dataset contains multiple variations of the same word, the vocabulary size increases unnecessarily.

For example:

run

running

runs

ran

These all represent the same concept, but without normalization they appear as separate tokens.

This creates several problems:

- Larger vocabulary size

- Increased computational cost

- Reduced model accuracy

- Difficulty identifying patterns

Text normalization techniques solve this problem by converting different word forms into a single base form.
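As a rough illustration of the vocabulary effect, the sketch below counts unique tokens before and after mapping each word to a base form. The `BASE_FORMS` table is a hand-written stand-in for a real stemmer or lemmatizer, assumed here only to keep the example self-contained.

```python
# Hand-written lookup standing in for a real stemmer or lemmatizer (assumption).
BASE_FORMS = {"running": "run", "runs": "run", "ran": "run"}


def normalize(token):
    """Return the base form if we know one, otherwise the token unchanged."""
    return BASE_FORMS.get(token, token)


tokens = ["run", "running", "runs", "ran", "run"]

raw_vocab = set(tokens)
normalized_vocab = {normalize(t) for t in tokens}

print(len(raw_vocab), sorted(raw_vocab))
print(len(normalized_vocab), sorted(normalized_vocab))
```

Four distinct tokens collapse into one, which is the effect normalization is meant to have on a real vocabulary.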

Two common normalization techniques are:

- Stemming

- Lemmatization

Knowing how the two differ helps you decide which method works best for your NLP pipeline.

What is Stemming in NLP?

Stemming is a text normalization technique that strips affixes, usually suffixes, from words to produce a root form called a stem.

The goal of stemming is not to produce a real word. Instead, it focuses on quickly reducing words to a common base.

For example:

| Word | Stem |
|---|---|
| playing | play |
| played | play |
| studies | studi |
| connection | connect |

Notice something important here.

The word “studies” becomes “studi.”

The word “studi” is not a real English word.

This happens because stemming uses rule-based algorithms instead of dictionaries. The algorithm simply strips suffixes such as ing, ed, or ies without checking whether the resulting word exists.

This is one of the key characteristics to keep in mind when comparing stemming with lemmatization.
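To make the rule-based idea concrete, here is a deliberately tiny suffix stripper. It is a toy written for this explanation, not the Porter algorithm: the `SUFFIX_RULES` list and the `min_stem_len` guard are assumptions chosen only to reproduce the table above.

```python
# Toy suffix rules, checked in order. This is NOT the Porter algorithm.
SUFFIX_RULES = [("ies", "i"), ("ing", ""), ("ion", ""), ("ed", "")]


def toy_stem(word, min_stem_len=3):
    """Apply the first suffix rule that matches and leaves a long enough stem."""
    for suffix, replacement in SUFFIX_RULES:
        if word.endswith(suffix):
            stem = word[: -len(suffix)] + replacement
            if len(stem) >= min_stem_len:
                return stem
    return word  # no rule applied


for word in ["playing", "played", "studies", "connection", "sing"]:
    print(word, "->", toy_stem(word))
```

Note that no dictionary is consulted at any point, which is why a stem such as "studi" can come out. The length guard keeps short words like "sing" from being reduced to a single letter.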

Advantages of Stemming

Stemming has several benefits:

- Very fast

- Simple to implement

- Works well for large datasets

- Useful in search engines and information retrieval systems

Disadvantages of Stemming

However, stemming also has limitations:

- Can produce incorrect words

- Does not understand context

- Lower linguistic accuracy

Because of these limitations, stemming is typically used when speed matters more than linguistic accuracy.

Stemming Example in Python Using NLTK

Now let’s implement stemming in Python using the NLTK library, one of the most popular NLP libraries.

First install NLTK if necessary:

pip install nltk

Now try the following example.

from nltk.stem import PorterStemmer

stemmer = PorterStemmer()
words = ["playing", "played", "studies", "connection"]

for word in words:
    print(word, "->", stemmer.stem(word))

Expected output:

playing -> play

played -> play

studies -> studi

connection -> connect

This example clearly demonstrates how stemming removes suffixes.

The PorterStemmer algorithm is one of the most commonly used stemming algorithms in NLP.

Other popular stemmers include:

- Lancaster Stemmer

- Snowball Stemmer

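Among these, PorterStemmer is a common default because it balances speed and accuracy and is less aggressive than the Lancaster stemmer. The English Snowball stemmer, also known as Porter2, is a later refinement of the Porter algorithm.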
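If you want to see how these stemmers differ on your own words, a side-by-side run is a quick way to check. The sketch below assumes NLTK is installed; these three stemmers do not need any corpus download. The word list is the one used earlier in this guide.

```python
from nltk.stem import LancasterStemmer, PorterStemmer, SnowballStemmer

stemmers = {
    "porter": PorterStemmer(),
    "snowball": SnowballStemmer("english"),
    "lancaster": LancasterStemmer(),
}

words = ["playing", "played", "studies", "connection"]

# Collect each stemmer's output so the differences are easy to compare.
results = {
    name: [stemmer.stem(word) for word in words]
    for name, stemmer in stemmers.items()
}

for name, stems in results.items():
    print(f"{name:>9}: {stems}")
```

Running this on a sample of your real vocabulary is usually more informative than relying on general rules of thumb.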

Understanding how stemming works is the first half of the comparison with lemmatization.

NLTK provides many other tools for natural language processing in Python; the NLTK documentation describes them in detail.

What is Lemmatization in NLP?

Lemmatization is another text normalization technique that reduces words to their dictionary base form, known as a lemma.

Unlike stemming, lemmatization uses linguistic knowledge and vocabulary databases to determine the correct base form of a word.

For example:

| Word | Lemma |
|---|---|
| running | run |
| better | good |
| studies | study |
| was | be |

Notice that the results are real English words.

This is the biggest difference between stemming and lemmatization.

While stemming focuses on speed and simplicity, lemmatization focuses on accuracy and linguistic correctness.

In linguistics, lemmatization refers to reducing words to their dictionary base form.

Advantages of Lemmatization

Lemmatization offers several advantages:

- Produces real dictionary words

- More accurate results

- Preserves word meaning

- Better for machine learning models

Disadvantages of Lemmatization

However, lemmatization also has trade-offs:

- Slower than stemming

- Requires linguistic resources

- More computationally expensive

Despite being slower, lemmatization is often preferred in modern NLP systems because it maintains semantic meaning.

Lemmatization Example in Python Using NLTK

Now let’s look at how to perform lemmatization using Python.

NLTK provides a tool called WordNetLemmatizer.

However, beginners often encounter an important issue when first trying this method.

If you run lemmatization without specifying the part-of-speech (POS) tag, the output may not change.

Example:

from nltk.stem import WordNetLemmatizer

lemmatizer = WordNetLemmatizer()
print(lemmatizer.lemmatize("running"))

Output:

running

Why does this happen?

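NLTK’s WordNetLemmatizer treats every word as a noun unless told otherwise: its pos argument defaults to "n".

“Running” is also a valid noun in WordNet, so the lemmatizer returns it unchanged. In this context, however, it is meant as a verb.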

To get the correct lemma, you must specify the POS tag.

Example with POS tag:

print(lemmatizer.lemmatize("running", pos="v"))

Output:

run

This small detail is extremely important when implementing lemmatization in Python.

Many beginners mistakenly believe lemmatization is not working when the real issue is missing POS tags.
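In practice the POS tag usually comes from a tagger rather than being typed by hand. Taggers such as `nltk.pos_tag` return Penn Treebank tags (for example `VBG`), while the lemmatizer expects WordNet letters (`n`, `v`, `a`, `r`), so a small mapping is needed. The helper name `penn_to_wordnet` and the hand-written tags below are assumptions for illustration; this is one common way to bridge the two, not the only one.

```python
def penn_to_wordnet(tag):
    """Map a Penn Treebank tag (e.g. 'VBG') to a WordNet POS letter."""
    if tag.startswith("J"):
        return "a"  # adjective
    if tag.startswith("V"):
        return "v"  # verb
    if tag.startswith("R"):
        return "r"  # adverb
    return "n"  # noun, which is also the lemmatizer's default


# Tags written by hand for illustration; a tagger would normally supply them.
tagged = [("cats", "NNS"), ("are", "VBP"), ("running", "VBG")]

for word, tag in tagged:
    print(word, tag, "->", penn_to_wordnet(tag))
    # With NLTK and the WordNet data installed, the lemma would then be:
    # lemmatizer.lemmatize(word, pos=penn_to_wordnet(tag))
```

Falling back to noun for unknown tags mirrors the lemmatizer's own default, so words the mapping does not recognize behave as they would without a tag.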

spaCy vs NLTK for Lemmatization

Many beginners start with NLTK, but modern NLP workflows often use spaCy.

Both libraries support lemmatization, but they differ in several ways.

| Feature | NLTK | spaCy |
|---|---|---|
| Learning curve | Beginner friendly | Slightly advanced |
| POS tagging | Manual | Automatic |
| Lemmatization | WordNet based | More accurate |
| Performance | Slower | Faster |

One advantage of spaCy is that it automatically performs POS tagging, which improves lemmatization accuracy.

Example using spaCy:

import spacy

nlp = spacy.load("en_core_web_sm")
doc = nlp("The cats are running faster")

for token in doc:
    print(token.text, token.lemma_)

Example output (exact lemmas can vary with the spaCy and model version):

The the

cats cat

are be

running run

faster fast

Because spaCy performs linguistic analysis internally, it often produces more accurate results.

When comparing stemming and lemmatization in Python, it is useful to experiment with both NLTK and spaCy.

The spaCy NLP library is widely used in modern NLP pipelines because of its fast and accurate linguistic processing.

Stemming vs Lemmatization in Python: Key Differences

Now that you understand both techniques, let’s compare them directly.

| Feature | Stemming | Lemmatization |
|---|---|---|
| Speed | Very fast | Slower |
| Accuracy | Lower | Higher |
| Output | May produce non-words | Real dictionary words |
| Method | Rule-based | Dictionary + linguistic analysis |
| Complexity | Simple | More complex |

When speed is important

Stemming is often used in applications like:

- Search engines

- Information retrieval

- Keyword matching systems

When meaning matters

Lemmatization is preferred in applications such as:

- Chatbots

- Sentiment analysis

- Text classification

- Machine learning models

Understanding these differences helps developers choose the right approach when building NLP pipelines.

Stemming vs Lemmatization Performance Comparison

In large NLP datasets, performance can matter.

Qualitative comparison (conceptual, not a measured benchmark):

| Method | Speed | Accuracy |
|---|---|---|
| Stemming | Very Fast | Medium |
| Lemmatization | Slower | High |

If your dataset contains millions of documents, stemming may be preferred for faster preprocessing.

However, for machine learning and AI applications, lemmatization often provides better results.
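Since the table above is conceptual, it is worth measuring on your own data before deciding. The helper below is a minimal timing sketch; `time_normalizer` is a hypothetical name, and `str.lower` is only a placeholder for the function you actually want to time.

```python
import time


def time_normalizer(normalize, tokens, repeats=3):
    """Return the best wall-clock time in seconds over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for token in tokens:
            normalize(token)
        best = min(best, time.perf_counter() - start)
    return best


# Placeholder workload and normalizer. Swap in stemmer.stem or
# lemmatizer.lemmatize to compare them on your own tokens.
tokens = ["running", "studies", "connection"] * 10_000
elapsed = time_normalizer(str.lower, tokens)
print(f"{len(tokens)} tokens in {elapsed:.4f} s")
```

Taking the best of several runs reduces noise from other processes. Absolute numbers will depend on your machine and library versions.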

Example: Stemming vs Lemmatization Comparison

Let’s compare both methods using a real sentence.

Sentence:

The cats are running faster than the dogs

Stemming result

the cat are run faster than the dog

Here, the Porter stemmer lowercases the text and strips plural endings and suffixes: cats becomes cat, running becomes run, and dogs becomes dog. It leaves faster unchanged.

Lemmatization result (without POS tags)

The cat are running faster than the dog

NLTK’s WordNet lemmatizer assumes noun by default, so only the plural nouns change:

- running stays running

- faster stays faster

- are stays are

Lemmatization result (with POS tags)

The cat be run fast than the dog

When POS tagging is applied:

- cats → cat

- are → be

- running → run

- faster → fast (when tagged as an adjective)

This demonstrates how POS tagging improves lemmatization accuracy.
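The stemming half of this comparison can be checked with a few lines. This sketch assumes NLTK is installed and uses a plain whitespace split, which is enough for this punctuation-free sentence. Note that NLTK's PorterStemmer lowercases its input by default.

```python
from nltk.stem import PorterStemmer

stemmer = PorterStemmer()
sentence = "The cats are running faster than the dogs"

# Stem each whitespace-separated token and rebuild the sentence.
stems = [stemmer.stem(token) for token in sentence.split()]
print(" ".join(stems))
```

The lemmatization half needs the WordNet data and a POS tag per token, so it is best run after the setup steps in the installation section.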

When Should You Use Stemming?

Stemming works best in situations where speed and efficiency are more important than linguistic precision.

Common use cases include:

Search engines

Search systems often use stemming to match queries with documents.

For example:

User searches for:

connect

The system should also find:

- connecting

- connected

- connection

Stemming helps group these variations together.
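A minimal sketch of this idea is an inverted index keyed by stem instead of by surface form. The `crude_stem` function below is a hypothetical stand-in written for this example; a real system would plug in a proper stemmer such as NLTK's PorterStemmer. The documents are made up for illustration.

```python
from collections import defaultdict


def crude_stem(word):
    """Hypothetical stand-in stemmer: strips a few common suffixes."""
    word = word.lower()
    for suffix in ("ing", "ion", "ed"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


documents = {
    1: "connecting to the server",
    2: "the connection was lost",
    3: "devices connected yesterday",
    4: "unrelated text",
}

# Inverted index: stem -> set of ids of documents containing that stem.
index = defaultdict(set)
for doc_id, text in documents.items():
    for token in text.split():
        index[crude_stem(token)].add(doc_id)

query = "connect"
matches = sorted(index.get(crude_stem(query), set()))
print(matches)
```

Because the query and the documents pass through the same stemmer, it does not matter that a stem may not be a real word. It only has to be consistent on both sides.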

Information retrieval systems

Large datasets require fast preprocessing. Stemming allows quick normalization of millions of words.

Keyword matching

Stemming can improve keyword matching in search and recommendation systems.

Because stemming is computationally lightweight, it works well for large-scale text processing.

When Should You Use Lemmatization?

Lemmatization is better suited for tasks where word meaning and context matter.

Common use cases include:

Sentiment analysis

Understanding sentiment requires accurate word forms.

For example:

better → good

This transformation helps models detect positive sentiment.

Chatbots and conversational AI

Chatbots must interpret user input accurately. Lemmatization helps normalize different word forms while preserving meaning.

Machine learning models

Accurate word normalization improves model performance in tasks such as:

- text classification

- topic modeling

- recommendation systems

NLP research projects

Most modern NLP pipelines prefer lemmatization because it provides linguistically meaningful outputs.

When deciding between stemming and lemmatization, consider whether your project prioritizes speed or accuracy.

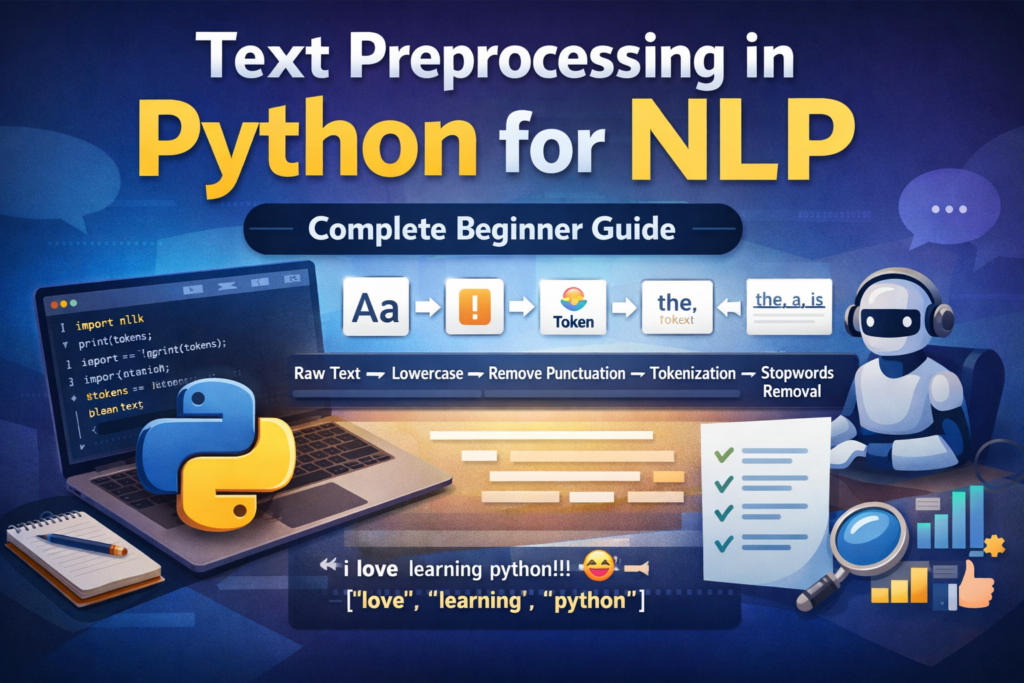

Where Stemming and Lemmatization Fit in an NLP Pipeline

Both techniques are typically part of the text preprocessing pipeline.

A typical NLP workflow looks like this:

Raw Text

↓

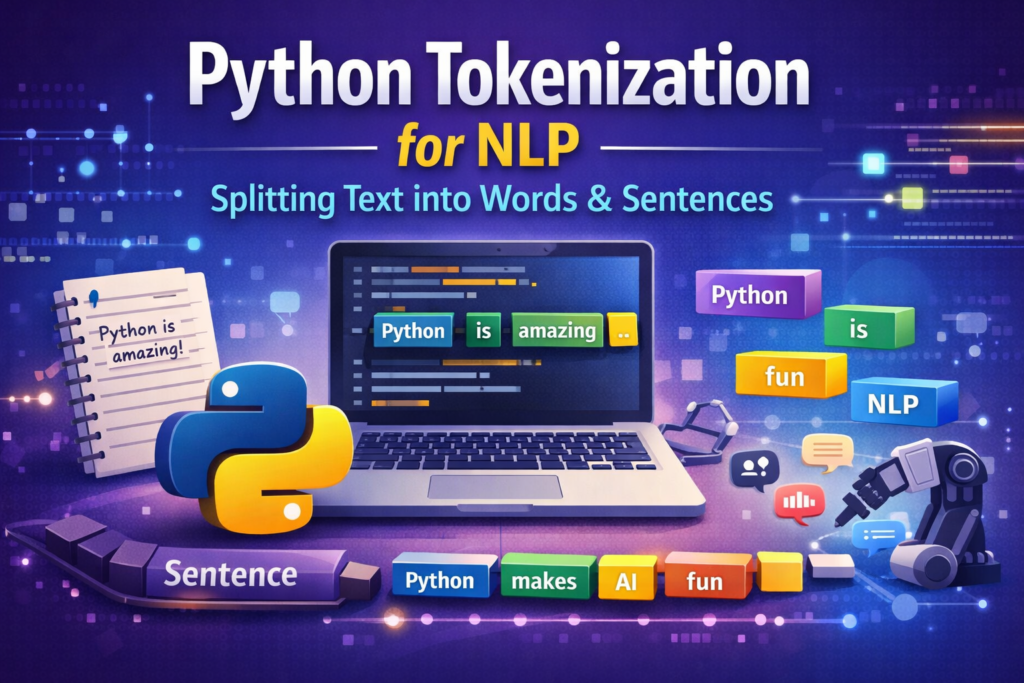

Tokenization

↓

Stopword Removal

↓

Stemming / Lemmatization

↓

Vectorization

↓

Machine Learning Model

Each step helps transform raw text into structured data that machine learning algorithms can process.

In most real-world NLP projects, normalization plays a crucial role in improving model performance.

Before applying stemming or lemmatization, the text must first be split into tokens, a step known as tokenization.
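The workflow above can be sketched end to end with the standard library alone. Everything here is simplified on purpose: the stopword list is a tiny illustrative set, `strip_plural` is a placeholder normalizer rather than a real stemmer or lemmatizer, and the bag-of-words `Counter` stands in for a proper vectorizer.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; real projects use a fuller one.
STOPWORDS = {"the", "are", "than", "a", "is"}


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def strip_plural(token):
    """Placeholder normalizer: drop a trailing 's' from longer tokens."""
    return token[:-1] if token.endswith("s") and len(token) > 3 else token


def preprocess(text, normalize=strip_plural):
    """Tokenize, remove stopwords, normalize, then count the tokens."""
    kept = [t for t in tokenize(text) if t not in STOPWORDS]
    return Counter(normalize(t) for t in kept)  # bag-of-words counts


features = preprocess("The cats are running faster than the dogs.")
print(features)
```

The `normalize` parameter is the slot where stemming or lemmatization fits: passing `stemmer.stem` or a lemmatizer call there changes the normalization step without touching the rest of the pipeline.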

Installing Required Python Libraries

Before running the examples in this guide, you need to install the required NLP libraries.

Install NLTK:

pip install nltk

Download required datasets:

import nltk

nltk.download('wordnet')

nltk.download('omw-1.4')

If you want to experiment with spaCy:

pip install spacy

python -m spacy download en_core_web_sm

This ensures all code examples in this tutorial run correctly.

Example: Using Lemmatization in a Sentiment Analysis Pipeline

In real NLP projects, text normalization helps machine learning models understand patterns better.

Example text dataset:

"I am loving this product"

"I loved this product"

"I love this product"

After lemmatization:

I be love this product

I love this product

I love this product

Now the machine learning model treats these sentences more consistently.

This improves:

- feature extraction

- model accuracy

- training efficiency
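The effect on feature extraction can be shown with the three sentences above. The `LEMMAS` table is a hand-written stand-in for a POS-aware lemmatizer, assumed here so the example runs without any downloads. It covers only the words in this toy dataset.

```python
from collections import Counter

# Hand-written lemma table standing in for a POS-aware lemmatizer (assumption).
LEMMAS = {"am": "be", "loving": "love", "loved": "love"}

sentences = [
    "I am loving this product",
    "I loved this product",
    "I love this product",
]


def bag_of_words(sentence, lemmatize=False):
    """Count lowercased tokens, optionally mapping each one to its lemma."""
    tokens = sentence.lower().split()
    if lemmatize:
        tokens = [LEMMAS.get(t, t) for t in tokens]
    return Counter(tokens)


# Vocabulary = union of the tokens seen across all sentences.
raw_vocab = set().union(*(bag_of_words(s) for s in sentences))
lemma_vocab = set().union(*(bag_of_words(s, lemmatize=True) for s in sentences))

print(len(raw_vocab), sorted(raw_vocab))
print(len(lemma_vocab), sorted(lemma_vocab))
```

After lemmatization all three sentences share the feature "love", so a model sees one signal instead of three separate ones.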

Best Practices for Text Normalization in NLP

When working with text preprocessing in Python, follow these best practices:

1️⃣ Use lemmatization for machine learning tasks

2️⃣ Use stemming for search engines or keyword matching

3️⃣ Always apply tokenization before normalization

4️⃣ Combine normalization with stopword removal

5️⃣ Test your preprocessing pipeline on sample data before training models.

Common Beginner Mistakes

When learning stemming and lemmatization in Python, beginners often make a few common mistakes.

Mistake 1: Ignoring POS tags

Without POS tagging, lemmatization may produce incorrect results.

Always specify POS tags when accuracy matters.

Mistake 2: Using stemming for meaning-sensitive tasks

If your application requires linguistic accuracy, stemming may not be suitable.

Mistake 3: Applying both techniques together

Some beginners mistakenly apply stemming and lemmatization sequentially.

In most cases, you should choose one method, not both.

Understanding these mistakes helps you build better NLP workflows.

Conclusion

Text normalization is a crucial step in natural language processing.

Two of the most common normalization techniques are stemming and lemmatization.

In this guide, you learned the differences between stemming and lemmatization in Python.

Stemming is:

- faster

- rule-based

- less accurate

Lemmatization is:

- slower

- linguistically informed

- more accurate

While stemming works well for search engines and information retrieval systems, lemmatization is often preferred for modern NLP applications such as chatbots, sentiment analysis, and machine learning models.

If you are just starting your NLP journey, experimenting with both techniques in Python will help you better understand how text preprocessing works.

Mastering these concepts is an important step toward building powerful AI and NLP systems.

If you want to apply these techniques in a real project, try this tutorial on how to build an AI text analyzer with Python.

FAQ

What is stemming in NLP?

Stemming is a technique that removes suffixes from words to produce a root form called a stem. It is fast but may produce non-dictionary words.

What is lemmatization in Python?

Lemmatization is a normalization technique that converts words into their dictionary base form using linguistic knowledge.

Which is better: stemming or lemmatization?

It depends on the application. Stemming is faster, while lemmatization is more accurate and linguistically meaningful.

Which Python libraries support lemmatization?

Popular Python libraries include:

- NLTK

- spaCy

Do machine learning models require stemming?

Not necessarily, but normalization techniques like stemming or lemmatization often improve NLP model performance.